Data Cities: how post-internet technology is changing the way we design our world

In this extract from her recent book on the subject, Davina Jackson surveys how satellites and data-driven design tools are redefining what it means to manage our environment.

[This is an abridged extract from Davina Jackson’s book Data Cities: How satellites are transforming architecture and design (Lund Humphries 2019).]

Adelaide-born MIT professor William J (Bill) Mitchell was one of the world’s most influential advocates of computers in architecture and, although he remains largely unrecognised for it, a progenitor of today’s “smart cities” movement. In his 1995 book City of Bits, he set out the vast implications of post-internet technology for planners and designers, predicted today’s smart roads, smart vehicles and smart sneakers, and forecast “a matrix of digital telecommunication systems and reorganised circulation and transportation patterns”. He set up MIT’s Smart Cities Group in 2003.

“From gesture sensors worn on our bodies to the worldwide infrastructure of communications satellites and long-distance fibre, the elements of the bitsphere will finally come together to form one densely interwoven system in which the kneebone is connected to the I-bahn,” he wrote.

During the decade after Mitchell catalysed the smart cities phenomenon, his vision was commandeered by global IT purveyors, notably Siemens, IBM, Cisco and Philips, to spruik to governments the (costly) benefits of installing internet-enabled telecoms and public lighting systems. They are still competing aggressively for government development budgets worth trillions. Cisco globally promotes its customer cities as “Smart+Connected Communities”, and Philips exploited the world’s first “smart light city” festivals, led by light art engineer Mary-Anne Kyriakou in Sydney in 2009 and in Singapore in 2010 and 2012, to launch a global “smart lighting” campaign that included further festivals in Amsterdam and other cities.

Early definitions of smart cities emphasised “intelligent” infrastructure – especially the buried cables and server security systems that are essential for so-called wireless communications and cloud computing. A second wave emphasised the ubiquity of big data – requiring secure storage in networks of data warehouses (which contain mostly processed information) and data lakes (which tend to be less expensive and much larger repositories of mainly unprocessed information). A third wave relies on flows of geodata, auto-detected by sensors and scanners and point-tagged with X-Y-Z location coordinates.
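To make the idea of point-tagged geodata concrete, here is a minimal sketch in Python. The `GeoObservation` record, its field names and the `within_box` helper are hypothetical, invented for illustration rather than drawn from any particular smart-city platform, and the two readings are made-up values. Each sensor reading carries its own X-Y-Z coordinates and a timestamp, which is what lets it be filtered by location (here, a rectangular precinct) before analysis.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class GeoObservation:
    """One sensor reading point-tagged with X-Y-Z coordinates (hypothetical schema)."""
    sensor_id: str
    x: float        # e.g. easting in metres
    y: float        # e.g. northing in metres
    z: float        # elevation in metres
    time: datetime  # when the reading was taken
    variable: str   # what was measured, e.g. "pm25"
    value: float


def within_box(observations, xmin, xmax, ymin, ymax):
    """Keep only observations whose X-Y position falls inside a rectangular precinct."""
    return [o for o in observations
            if xmin <= o.x <= xmax and ymin <= o.y <= ymax]


# Illustrative readings only, not real measurements.
readings = [
    GeoObservation("truck-07", 10.0, 20.0, 5.0,
                   datetime(2019, 6, 1, 9, 0, tzinfo=timezone.utc), "pm25", 12.4),
    GeoObservation("truck-07", 55.0, 20.0, 7.5,
                   datetime(2019, 6, 1, 9, 5, tzinfo=timezone.utc), "pm25", 18.1),
]

inside = within_box(readings, 0, 50, 0, 50)
print([o.value for o in inside])  # [12.4]
```

A real system would use a proper coordinate reference system and spatial index; the plain rectangle test is kept only to show why every reading needs its location attached.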

Ultimately every concept for a smart city depends on data flows through satellites and through glass fibre cables sunk across the oceans. Today’s advocates of data cities contend that intelligent planning decisions require analysis and visualisations of time-streamed, location-identified statistics and observations. This evidence-based decision-making approach was galvanised by America’s SAGE (Semi-Automatic Ground Environment) project, set up in the late 1940s to use radar data and a cluster of mainframes to compute a real-time map of the world. Although this project did not meet many expectations of American defence officials, it allowed key researchers at IBM, MIT, Harvard and the University of Washington to test early methods of processing digital environmental data.

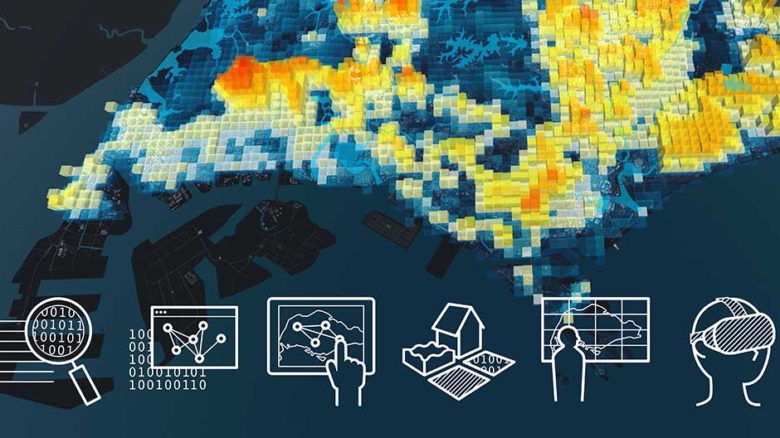

City Scanner obtains weather and air quality data from sensors fixed to garbage trucks. Image: SENSEable Cities Lab

Touch screens are used for urban planning workshops by CIVAL at the Singapore-ETH Centre. Image: CIVAL

Singapore Views, one of the graphic tools used to visualise data from Cooling Singapore. Image: Singapore-ETH Centre

In 1954, Howard Fisher and Betty Bishop pioneered the modern discipline of geographical information systems (GIS). The pair authored SYMAP (SYnagraphic MAPping) – the world’s first software program to graphically map land areas in greyscale by arranging and overlaying different typewriter symbols to plot terrestrial conditions. Carl Steinitz and his 1960s student Jack Dangermond, two of Fisher’s followers at Harvard’s Graduate School of Design, led the GIS domain via Steinitz’s professorial teaching and research, and Dangermond’s development of Esri’s ArcGIS mapping software (launched from SYMAP and now dominant among geographers and planners worldwide).
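The principle of building tones from typed characters can be imitated in a few lines of Python. This is a rough sketch, not SYMAP’s actual code or symbol set: SYMAP overprinted several characters on a line printer to darken a cell, whereas this sketch assumes a single-character ramp (`RAMP`, a hypothetical choice) running from light to dark. Each grid value is normalised, sorted into one of a few equal-width classes, and printed as the matching character.

```python
# A rough imitation of SYMAP-style greyscale mapping, NOT SYMAP's algorithm.
# Darker characters stand in for SYMAP's overprinted symbol combinations.
RAMP = " .:+X#"  # lightest to darkest; hypothetical symbol choice


def shade(grid, lo, hi):
    """Render a 2D list of numbers as text rows, darker for higher values."""
    levels = len(RAMP)
    rows = []
    for row in grid:
        chars = []
        for v in row:
            # Normalise to 0..1 and clamp values outside the [lo, hi] range.
            t = min(max((v - lo) / (hi - lo), 0.0), 1.0)
            # Pick one of `levels` equal-width classes; t == 1.0 maps to the darkest.
            idx = min(int(t * levels), levels - 1)
            chars.append(RAMP[idx])
        rows.append("".join(chars))
    return rows


# Illustrative values only, e.g. elevations in metres on a small grid.
terrain = [
    [0, 10, 20, 30],
    [10, 40, 60, 50],
    [20, 60, 100, 70],
]

for line in shade(terrain, 0, 100):
    print(repr(line))
```

Equal-width classes are the simplest choice; a cartographer might instead use quantiles so that each tone covers a similar share of the map.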

Since 2011, when Esri acquired the CityEngine 3D procedural simulations program from an ETH-Zurich spin-off company, Dangermond has countered the zooming, swirling platform of Google Earth by promoting Esri’s toolkit as the basis of a new environmental simulations movement that he named Geodesign. Dangermond wrote: “Geodesign represents the dawn of a new era of man’s relationship with the environment: the age of designing. As we move from exploiting geography through conserving geography to the new paradigm of designing geography, we are redefining what it means to be masters of our environment.”

Another early American data cataloguing and navigation project which remains influential was MIT’s 1976 Spatial Data Management System (SDMS), which immersed users in a room with a wall-sized colour display, two desktop screens, octophonic sound, and an Eames chair wired to the machines. Players could take armchair flights over a 2D Dataland, zooming and panning to click primitive graphical icons that opened to give access to electronic books, satellite maps, personal files, contact information and collections of picture and video clips. Two decades later, MIT Media Lab leader Nicholas Negroponte recalled that “SDMS was so far ahead of its time that a decade had to pass and personal computers had to be born before some of the concepts could move into practice […] What has changed is that today’s Datalands are not spread floor-to-ceiling, wall-to-wall. Instead they are accordioned into windows.”

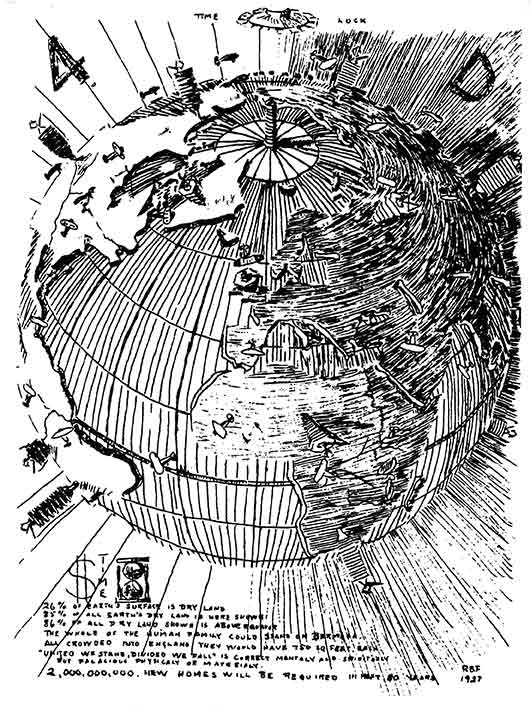

Ten years before the first electronic computer and two decades before the first Earth observation photograph, Richard Buckminster Fuller published his 1927 diagram proposing a ‘4D Air-Ocean World Town Plan’. Image: John Ferry/The Estate of R. Buckminster Fuller


Although the physical-virtual domain of Dataland morphed into the graphical user interfaces of small computers, the full-scale experience of hybrid reality remains desirable for designing and performance-testing buildings and cities. Swiss university ETH’s Future Cities Laboratory has established several labs for collaborative urban development communications, including remote teaching and online conferencing. These are large rooms on the Zurich and Singapore campuses, each fitted with a touch-screen video wall, a trio of large screens with touch overlays, various mobile and surface (tabletop) computing screens and sophisticated video conferencing equipment. ETH and other futuristic universities also have immersive VR caves with multiple projection walls, control interfaces and devices for measuring users’ physiologies and behaviours.

These resources were valuable for the Singapore-ETH Centre’s Cooling Singapore project, which compiled and visualised data to help identify and mitigate the urban heat island effect across the island nation. The founder of the Future Cities Lab, Gerhard Schmitt, noted that “big data-informed urban design aims to extend the smart city concept to strengthen the human dimension and to move the citizen to the centre by incorporating a wider and more complex set of urban governance, planning and design concerns.”

Since the heyday of Mitchell and Negroponte, a cluster of younger MIT professors have demonstrated astonishing urban design innovations exploiting electronically mediated interplays of light, energy and data. At the Venice Biennale of Architecture in 2006, Carlo Ratti’s SENSEable Cities Lab dramatically presented dynamic mapping of pedestrians carrying mobile phones around the streets of Rome, with crescendos of streaming data for a major soccer match and a Madonna concert at the stadium. This energetic display, Real-Time Rome, caused a sensation among architects wearied by the discipline’s history of designing mostly static structures.

More recently, Ratti’s team worked with the City of Cambridge, Massachusetts, on the City Scanner project to obtain weather and air quality data for different precincts from sensors fixed to garbage trucks. In 2011, Joseph Paradiso’s Responsive Environments group revealed what was probably the world’s first video simulation of invisible atmospheric dynamics inside a building, using temperature, humidity, light, sound, human movement and other data streaming from RFID and other sensors around several floor levels of the Media Lab. Its network of sensors (originally developed for space missions) recorded how interiors invisibly pulsate.

Christoph Reinhart’s Sustainable Design Lab developed an urban modelling interface (umi) program which evaluates key aspects of the environmental performance of neighbourhoods and cities. First the area being studied is architecturally modelled in Rhino 3D, then the model is analysed for walkability, daylighting and several types of energy consumption. More extraordinary advances with urban simulations are being developed via the CityScope research project in Kent Larson’s City Science group. CityScope favours quick physical modelling of built environments using colour-coded Lego bricks, which are sensor-tagged and plotted on screens as “data units”. In precinct modelling for several international cities, Larson and his students rapidly moved their data bricks around to reveal ways to improve the density, proximity to services, and demographic diversity (vibrancy) of each area.

Futurists rapidly tire of buzzwords, even neologisms they have invented or popularised personally, once they become hackneyed in the mainstream. Larson is now “looking beyond smart cities”, as is Tim Campbell, an international urban development strategist who worked with the World Bank during the 1990s. In Beyond Smart Cities (2012), Campbell suggested that ‘clouds of trust’ (networks of key actors in any community) provide the best platform for urban communities to continue to develop. He also suggested four essential factors for a learning city: gathering knowledge, fostering a milieu of trust, building institutional processes to support learning, and recording knowledge for future generations.

Britain’s Royal Geographical Society is also looking beyond smart cities to consider how they are enmeshed with human “systems”. A digital geographies session at its 2018 Cardiff conference debated how technology is being applied and how it is changing lives and societies. Oxford University convenors Gillian Rose and Oliver Zanetti highlighted various unfamiliar questions, including: What diverse things compose the “smartness” of a city? What are the various forms of social agency that are enacted through smart activities? How do specific smart entities co- and re-constitute forms of social difference both familiar and new? What happens when something smart that has been designed in one place lands in another?

Answering these queries seems impossible without rapid access to reliable, current and comprehensive data. That challenge is beginning to be tackled. In 2014, the International Organization for Standardization (ISO) launched its first suite of indicators to measure and compare the performance of cities across 17 general themes, including the economy, energy, governance, health, telecommunications and innovation, transport, waste and water. Developed by the Global City Indicators Facility (GCIF) at the University of Toronto and promoted by the related World Council on City Data (both founded by Patricia McCarney), the ISO 37120 standard uses some criteria that seem debatable, for example defining “fire and emergency response” performance by the number of deaths per capita from arson and natural disasters. But it has been widely accepted as a valuable initial platform for globally exchanging data about cities, and is beginning to be illustrated online with maps and graphs that may become useful for public participants in urban development debates.
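Indicators of this kind only allow cities of very different sizes to be compared because raw counts are normalised by population. The sketch below shows that arithmetic with a hypothetical `rate_per` helper and invented figures for two unnamed cities; the scale of 100,000 residents is a common convention assumed here, and anyone reporting against ISO 37120 should check the exact unit each indicator specifies in the standard itself.

```python
def rate_per(count, population, per=100_000):
    """Express a raw count as a rate per `per` residents (hypothetical helper)."""
    if population <= 0:
        raise ValueError("population must be positive")
    return count * per / population


# Invented figures for illustration only: (deaths, population).
cities = {
    "City A": (12, 4_000_000),
    "City B": (3, 250_000),
}

for name, (deaths, population) in cities.items():
    print(name, round(rate_per(deaths, population), 2))
```

With these made-up numbers, City B records far fewer deaths than City A but has the higher rate (1.2 versus 0.3 per 100,000), which is exactly the distortion that normalisation is meant to expose.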

University College London (UCL) is one hotbed of urban data simulations. Its Bartlett Centre for Advanced Spatial Analysis (CASA) created the Virtual London and Google Earth London visualisations and many time-flow data maps showing aircraft traffic, potential floods, bicycle use, histories and characteristics of buildings, and demographics in different neighbourhoods. Its founder, Michael Batty, was educated in Manchester during Britain’s 1960s heyday of modernist town planning and is a mathematician versed in the science of complex systems. As one of the world’s eminent professors of analytics, he can scientifically explain a view that many architects share from experience on their own projects: that the bureaucratic processes for planning cities are a wicked problem.

“Wicked problems fight back and they resist solution,” Batty wrote. “Wicked problems are unique and have no definitive formulation. Often the problem and the solution are the same thing, they have no stopping rule, it is hard to tell when a solution has been reached, there is no agreement about a solution, and no ultimate test that establishes whether a solution is optimal or has actually ever been reached.”

Some veteran architecture professors (educated before mobile telephony) enjoy challenging tech-savvy millennials with a question first posed by Cedric Price during 1960s and 1970s lectures in London. “Technology is the answer,” Price said. “But what was the question?”

This sceptical witticism ignored many questions and challenges that still worry boffins – 90 years after Bucky Fuller first published his visionary 4D Air-Ocean World Town Plan in 1928 (when computers were all people). One response now might be to pose another question. If bureaucracy – indeed human behaviour generally – remains an intractable problem, how could technology effectively deliver solutions? Many computers – human and electromagnetic – are calculating that quandary.

–

Davina Jackson is a Sydney-based author, editor and curator, and an honorary academic with the University of Kent School of Architecture.
